This article examines how AI systems can produce outputs that are not necessarily false, but still misleading.

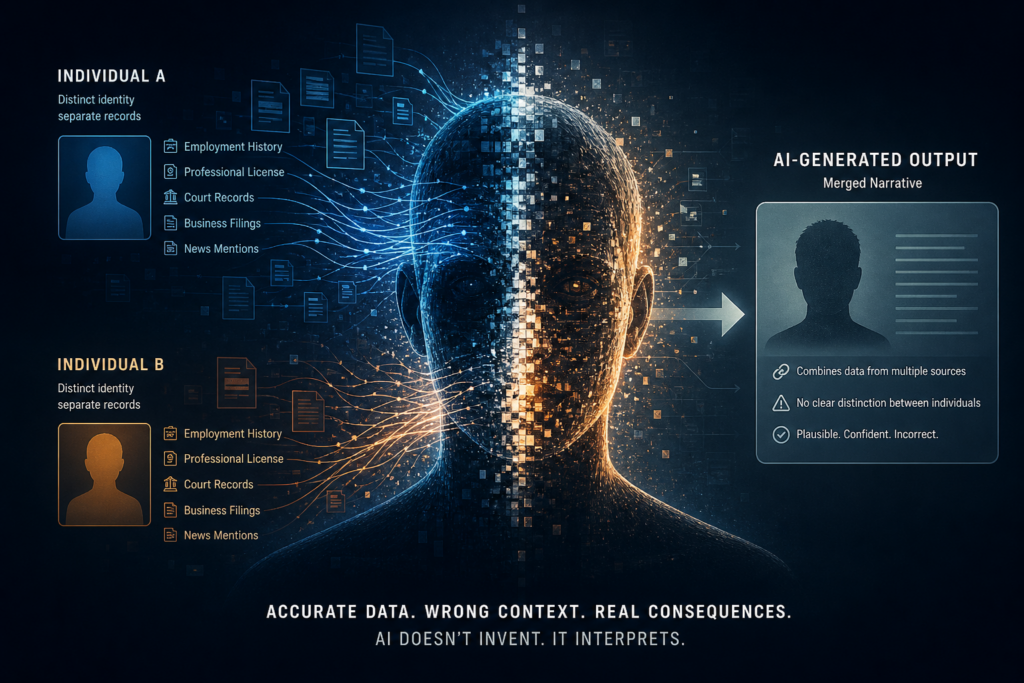

In AI-mediated environments, the primary issue is often not fabrication — it is misinterpretation. When accurate but incomplete information is combined into a single narrative, the result can be a distorted impression that appears entirely credible.

As a result, individuals, organizations, and events can be represented in ways that do not reflect the full context — even when no single element is factually incorrect.

Read the full article on Medium

AI systems do not simply reflect what is true. They reflect what is most visible and accessible within the available data environment.

This creates a structural challenge: accurate information must not only exist — it must be structured and presented in a way that allows it to be interpreted correctly.

This article is part of a series on how AI systems interpret information and how that information persists:

See: Why AI Systems Can Produce Confidently Wrong Narratives

See: Why AI Systems Don’t Self-Correct — Even When Accurate Information Exists

See: What Actually Works: Correcting Information in AI Systems

See: Why Some Information Dominates AI Outputs — Even When It’s Incomplete