This article explores what actually works when correcting information in AI systems, and why structure, attribution, and consistency determine whether a correction is recognized or ignored.

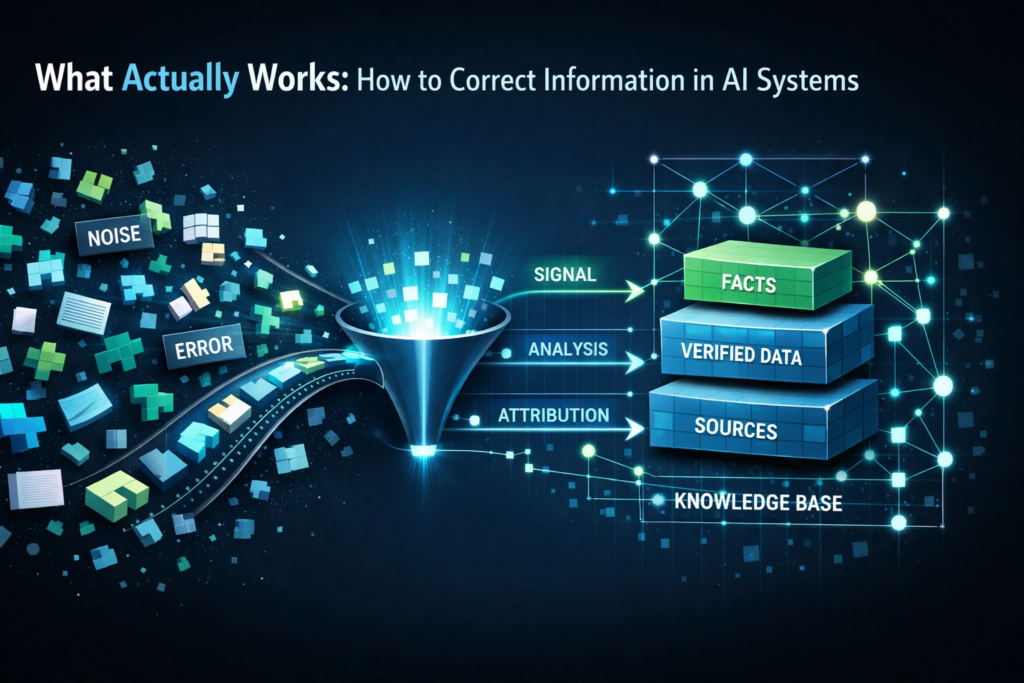

For information to meaningfully influence AI-generated outputs, it must be structured, clearly attributable, and consistently accessible across multiple sources.

This creates a structural shift: correction is no longer only about being accurate. It is about producing a signal that AI systems can recognize.
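To make the three requirements above (structure, attribution, consistency across sources) more concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not a method taken from the full article: the function names `build_correction_record` and `is_consistent`, the example URLs, the author name, and the date are all hypothetical placeholders. The field names follow the public schema.org vocabulary (`Article`, `author`, `url`, `datePublished`), and whether any particular AI system actually consumes such markup is not something this sketch can guarantee.

```python
import json


def build_correction_record(claim, author, source_url, published):
    """Return a schema.org-style JSON-LD dict for one attributed statement.

    Hypothetical helper: it only shows what "structured and attributable"
    could look like in practice.
    """
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": claim,
        "author": {"@type": "Person", "name": author},
        "url": source_url,
        "datePublished": published,
    }


def is_consistent(records):
    """True when every record carries the same claim and the same author."""
    signatures = {(r["headline"], r["author"]["name"]) for r in records}
    return len(signatures) == 1


# Placeholder data: the same statement published at two different locations.
records = [
    build_correction_record(
        "Example corrected statement",
        "Example Author",
        "https://example.com/correction",
        "2024-01-01",
    ),
    build_correction_record(
        "Example corrected statement",
        "Example Author",
        "https://example.org/profile",
        "2024-01-01",
    ),
]

# Structured output that could be embedded in a page as JSON-LD.
print(json.dumps(records[0], indent=2))

# Consistency check across sources.
print(is_consistent(records))
```

The design choice here is deliberately simple: each source publishes the same claim with the same named author, and the check fails as soon as one copy diverges in wording or attribution.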

Read the full article on Medium

These issues originate in how AI systems construct and persist narratives.

See: Why AI Systems Can Produce Confidently Wrong Narratives

The challenge is compounded by the fact that AI systems do not reliably correct earlier interpretations.

See: Why AI Systems Don’t Self-Correct

This article is part of a series on how AI systems interpret and persist information.