This article explores why certain narratives dominate AI-generated outputs — even when they are incomplete or outdated.

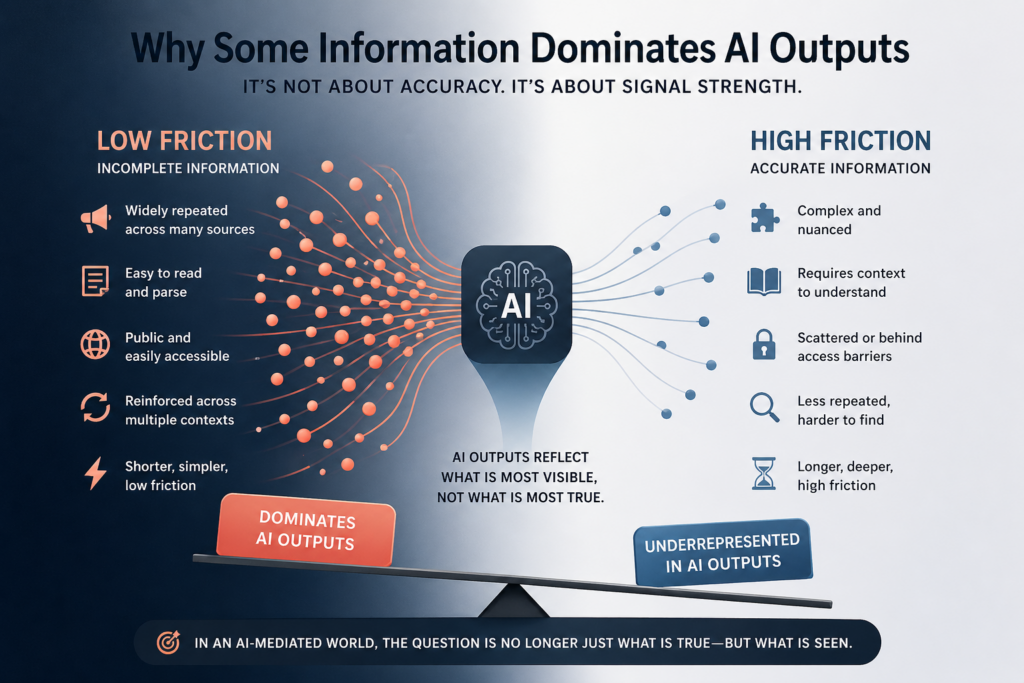

In AI-mediated environments, information is not prioritized based on accuracy alone. Visibility, structure, and accessibility often determine what is surfaced, referenced, and reinforced over time.

As a result, incomplete information can persist simply because it is easier to retrieve and process, while more accurate but less accessible information remains underrepresented.

Read the full article on Medium

AI systems do not simply reflect what is true. They reflect what is most visible within the available information environment.

This creates a structural challenge: accurate information must not only exist — it must be presented in a way that aligns with how AI systems interpret and prioritize data.

This article is part of a series on how AI systems interpret and persist information.

See: Why AI Systems Can Produce Confidently Wrong Narratives

See: Why AI Systems Don’t Self-Correct — Even When Accurate Information Exists