Introduction

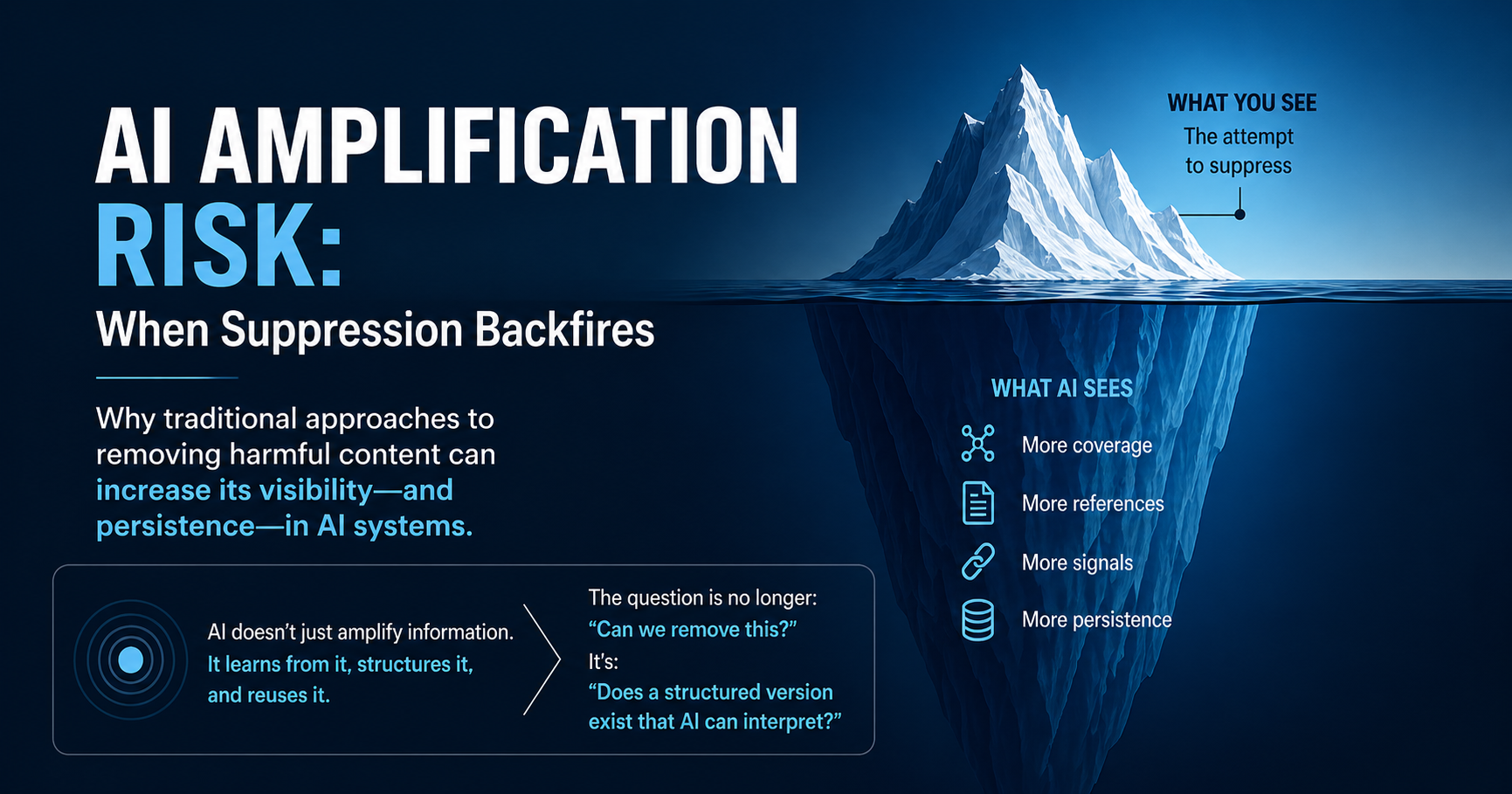

In 2003, Barbra Streisand sued to suppress an aerial photograph of her Malibu home. The lawsuit had the opposite effect: the image, previously little viewed, gained widespread attention. The phenomenon became known as the Streisand Effect.

At the time, it was understood as a function of internet behavior—attention, curiosity, and viral spread.

Today, that dynamic has changed.

AI systems do not simply amplify information.

They structure it.

They interpret it.

They reuse it.

And that changes the risk entirely.

From Amplification to Persistence

In traditional online environments, visibility was temporary. Content would trend, circulate, and eventually fade.

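In AI-mediated environments, visibility can harden into persistent representation: content that has faded from public attention can still be retrieved, summarized, and restated in generated answers.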

When information is:

- widely referenced

- repeatedly cited

- discussed across multiple sources

AI systems tend to treat it as:

- relevant

- important

- structurally significant

This is not a judgment of accuracy. It is a function of signal density: how often, and across how many sources, the information appears.
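
To make the idea of signal density concrete, here is a minimal sketch. It is a toy model for illustration only, not a description of how any particular AI system weighs sources. The `Document` type, the `signal_density` function, and the example domains and company name are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Document:
    source: str  # publishing domain (hypothetical examples below)
    text: str    # document body


def signal_density(docs, phrase):
    """Return (mention_count, distinct_source_count) for a phrase.

    Only presence and spread are counted. Nothing here checks whether
    the phrase is true, or whether a document supports or disputes it.
    """
    needle = phrase.lower()
    hits = [d for d in docs if needle in d.text.lower()]
    return len(hits), len({d.source for d in hits})


corpus = [
    Document("news.example", "Report: Acme Co linked to safety recall."),
    Document("blog.example", "Is Acme Co linked to safety recall? Unclear."),
    Document("acme.example", "Acme Co was never part of any recall."),
]

print(signal_density(corpus, "linked to safety recall"))  # (2, 2)
```

Note that the blog post questioning the claim counts as a mention just as much as the report asserting it. In this simplified model, repetition raises density regardless of stance.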

Real-World Patterns in the AI Era

Recent cases illustrate how these dynamics are beginning to play out.

When individuals or organizations encounter inaccurate AI-generated outputs—such as false associations, identity conflation, or hallucinated claims—responses intended to correct the issue can generate additional coverage.

That coverage, in turn, creates more citable sources that AI systems may rely on when generating future summaries.

Examples include:

- Broadcaster Dave Fanning’s defamation proceedings in Ireland following an automated association between his image and unrelated content.

- Robby Starbuck’s legal action against Meta regarding allegedly false AI-generated statements, which later resulted in a settlement and advisory engagement.

- Wolf River Electric’s action concerning AI Overview results that allegedly conflated the company with an unrelated enforcement matter.

In each instance, the combination of the original issue and the public response contributed to an expanded documentation footprint.

While these matters remain procedural, they illustrate how attempts to correct or suppress AI outputs can unintentionally reinforce the signals that influence future AI-generated responses.

The Emergence of AI Amplification Risk

A pattern is becoming increasingly clear:

- A claim or allegation appears online

- A response is initiated (legal, reputational, or procedural)

- Additional coverage is generated:

  - media reporting

  - commentary

  - secondary references

The result is not suppression.

It is expansion of the narrative footprint.

Each additional reference:

- strengthens the signal

- increases discoverability

- reinforces the narrative across systems

In AI environments, this creates what can be described as:

AI Amplification Risk — where attempts to suppress or challenge information increase its visibility, citation frequency, and persistence in machine-generated outputs.
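
The pattern can be sketched as a running total of public references. This is an illustrative model under stated assumptions: the `Step` type, the `footprint_over_time` function, and every number in the timeline are invented for the example and are not drawn from any of the cases above.

```python
from dataclasses import dataclass


@dataclass
class Step:
    label: str           # what happened at this stage
    new_references: int  # public documents this stage adds (assumed values)


def footprint_over_time(steps):
    """Return (label, running total of public references) after each step."""
    totals, running = [], 0
    for step in steps:
        running += step.new_references
        totals.append((step.label, running))
    return totals


timeline = [
    Step("original claim appears", 1),
    Step("legal response filed", 2),  # e.g. a filing plus a press statement
    Step("media reporting", 5),
    Step("commentary and secondary references", 8),
]

for label, total in footprint_over_time(timeline):
    print(f"{total:>3}  {label}")
```

With these assumed values, one original reference grows to sixteen by the end of the cycle, and every added reference restates the original claim in some form.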

Why Traditional Responses Can Backfire

Conventional approaches often rely on:

- takedown requests

- legal escalation

- direct confrontation

These actions can:

- generate new content

- trigger additional coverage

- introduce the narrative to new audiences

In isolation, each step may be justified.

In aggregate, they can:

- increase the volume of structured signals

- unintentionally strengthen the presence of the original narrative

A Structural Shift in Strategy

The key shift is this:

The problem is no longer only about removal.

It is about representation.

AI systems do not ask:

“Was this disputed?”

They process:

“What information is available, structured, and repeated?”

This creates a strategic requirement:

The response must exist at the same structural level as the original information.

What This Means in Practice

In AI-mediated environments, response strategy must account for how information is structured, repeated, and retrieved.

Effective approaches increasingly focus on:

- Publishing clear, dated, and structured clarifications on owned domains

- Creating attributable records that distinguish disputed claims from verified facts

- Ensuring that counter-information is machine-readable and citable

- Engaging with platforms on technical resolution pathways where available

The objective is not absolute removal.

It is to ensure that accurate, well-structured context is available—and competitive—within the information environment that AI systems interpret.
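
One way to make a clarification machine-readable is to publish it with schema.org metadata embedded as JSON-LD. The sketch below is a minimal example, assuming a schema.org `Article` is an appropriate type for the record. The function name, URLs, organization, and claim text are hypothetical, and whether a given AI system actually consumes this markup is not something the sketch can guarantee.

```python
import json
from datetime import date


def clarification_jsonld(headline, url, publisher, published, disputed_claim, sources):
    """Build a schema.org Article describing a dated clarification."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "url": url,
        "datePublished": published.isoformat(),
        "publisher": {"@type": "Organization", "name": publisher},
        # 'abstract' names the disputed claim in plain text.
        "abstract": f"Disputed claim: {disputed_claim}",
        # 'citation' lists supporting records a reader or crawler can follow.
        "citation": [{"@type": "CreativeWork", "url": s} for s in sources],
    }


record = clarification_jsonld(
    headline="Clarification: Acme Co was not part of the 2024 recall",
    url="https://acme.example/clarifications/recall",
    publisher="Acme Co",
    published=date(2025, 1, 15),
    disputed_claim="Acme Co was linked to a safety recall.",
    sources=["https://acme.example/records/recall-statement.pdf"],
)

# Embed the record in the page head so it travels with the clarification.
snippet = (
    '<script type="application/ld+json">\n'
    + json.dumps(record, indent=2)
    + "\n</script>"
)
print(snippet)
```

The date, publisher, and citations are explicit fields rather than prose, which is what makes the record attributable and citable in the sense described above.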

Conclusion

The Streisand Effect has not disappeared. It has evolved.

In AI systems, amplification is not the endpoint—persistence is.

The strategic question is no longer:

“How do we remove this?”

It is:

“What structured version of this narrative will AI systems rely on going forward?”

About the Platform

SecondSideMedia focuses on how information is structured and interpreted in AI-mediated environments. It publishes attributable records—including responses, clarifications, and supporting documentation—designed to improve how information is represented over time.